Automating Site Backups with Amazon S3 and PHP

This article was originally written for and published at TechOats on June 24th, 2015. It has been posted here for safekeeping.

I host quite a few websites. Not a lot, but enough that the thought of manually backing them up at any regular interval fills me with dread. If you’re going to do something more than three times, it is worth the effort of scripting it. A while back I got a free trial of Amazon Web Services and decided to give S3 a try. Amazon S3 (Simple Storage Service) lets users store data and pay only for the space they use, as opposed to a flat rate for an arbitrary amount of disk space. S3 also scales with you: there is no storage allotment to run out of, because more space is provided automatically as you need it.

S3 also has a web services interface, making it an ideal tool for system administrators who want to set it and forget it in an environment they are already comfortable with. As a Linux user, I found a myriad of existing tools for integrating with S3, and one of them was all I needed for my simple backup automation.

First things first, I run my web stack on a CentOS installation. Different Linux distributions may have slightly different utilities (such as package managers), so these instructions may differ on your system. If you see anything along the way that isn’t exactly how you have things set up, take the time to research how to adapt the processes I have outlined.

In Amazon S3, before you back up anything, you need to create a bucket. A bucket is simply a container that you use to store data objects within S3. After logging into the Amazon Web Services Console, open the S3 panel and create a new bucket using the button provided. Buckets can differ in price, naming, and physical location around the world, so it is best to read Amazon’s documentation to figure out what works best for you before creating yours. For our purposes, any bucket configuration will do; the rest of this guide works the same regardless of the options you choose.

After I created my bucket, I stumbled across a tool called s3cmd which allows me to interface directly with my bucket within S3.

Installing s3cmd was as easy as bringing up my console and entering:

sudo yum install s3cmd

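The application will install, easy as that. (On CentOS, s3cmd is provided by the EPEL repository, so you may need to enable EPEL first, for example with sudo yum install epel-release.)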

Now we need an access key and a secret key from AWS. To get them, visit https://console.aws.amazon.com/iam/home#security_credential and click the plus icon next to Access Keys (Access Key ID and Secret Access Key). Then click the button labeled Create New Access Key to generate your keys. They will be displayed in a pop-up on the page. Leave this pop-up open for the time being: AWS shows the secret key only when it is created, so you can’t look it up again later.

Back in your console, open s3cmd’s configuration file, located in your home directory, with your text editor of choice (if the file doesn’t exist yet, running s3cmd --configure will create it interactively, prompting for the same keys):

nano ~/.s3cfg

The file you are editing (.s3cfg) needs both the access key and the secret key from that pop-up you saw earlier on the AWS site. Edit the lines beginning with:

access_key = XXXXXXXXXXXX

secret_key = XXXXXXXXXXXX

Replace each string of “XXXXXXXXXXXX” with your respective access and secret keys from earlier. Then save the file (in nano, press CTRL+O to write it out and CTRL+X to exit).

Now we are ready to write the script to do the backups. For the sake of playing with different languages, I chose to write my script in PHP. You could accomplish the same behavior using Python, Bash, Perl, or other languages, though the syntax will differ substantially. First, our script needs a home, so I created a backup directory within my home directory to house the script and any local backup files. Then I changed into that directory and started editing my script using the commands below:

mkdir backup

cd backup/

nano backup.php

Now, we’re going to add some code to our script. I’ll show an example for backing up one site, though you can easily duplicate and modify the code to back up multiple sites. Let’s take things a few lines at a time. The first line opens the PHP block: anything after <?php is interpreted as PHP code. The second line sets our time zone, which should be the time zone of your server’s location. The date() calls in the next few steps use it to stamp each backup file with the current date.

<?php

date_default_timezone_set('America/New_York');
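To see why the time zone matters, here is a small sketch (not part of the original script) that formats one instant in two zones. backup_filename() is a hypothetical helper that mirrors the script’s 'Y-m-d' naming, and the date is only illustrative.

```php
<?php
// Hypothetical helper mirroring the script's naming: site-kind-YYYY-MM-DD.tar.gz
function backup_filename(string $site, string $kind, DateTimeInterface $when): string
{
    // 'Y-m-d' is the same format string the script passes to date().
    return $site . '-' . $kind . '-' . $when->format('Y-m-d') . '.tar.gz';
}

// 11:30 PM on June 24th, 2015 in New York (EDT, UTC-4)...
$local = new DateTimeImmutable('2015-06-24 23:30', new DateTimeZone('America/New_York'));
// ...is already 3:30 AM on June 25th in UTC.
$utc = $local->setTimezone(new DateTimeZone('UTC'));

echo backup_filename('sitex.com', 'db', $local), PHP_EOL; // sitex.com-db-2015-06-24.tar.gz
echo backup_filename('sitex.com', 'db', $utc), PHP_EOL;   // sitex.com-db-2015-06-25.tar.gz
```

The same moment lands on different calendar days, so setting the zone explicitly keeps the file names in line with your server’s local date.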

Next, we dump our site’s database by executing the mysqldump command through PHP. If you don’t run MySQL, you’ll have to modify this line to use your database solution. Replace the username, password, and database name on this line as well. This backs up the database and stamps the file name with today’s date for reference. The following line archives and compresses the dump using gzip; feel free to substitute your favorite compression. The last line deletes the original .sql file using PHP’s unlink(), since we only need the compressed copy. Note that the ~ in the exec() calls is expanded by the shell, but PHP’s own file functions such as unlink() don’t expand it, so that line builds the path from the HOME environment variable instead.

exec("mysqldump -uUSERNAMEHERE -pPASSWORDHERE DATABASENAMEHERE > ~/backup/sitex.com-".date('Y-m-d').".sql");

exec("tar -zcvf ~/backup/sitex.com-db-".date('Y-m-d').".tar.gz ~/backup/sitex.com-".date('Y-m-d').".sql");

unlink(getenv('HOME')."/backup/sitex.com-".date('Y-m-d').".sql");
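The dump step can be made a little sturdier. The following is an alternative sketch, not the original code: it quotes arguments with escapeshellarg() and checks the exit status that exec() reports, so a failed dump stops the script instead of quietly producing an empty archive. It assumes your MySQL credentials are kept in a [client] section of ~/.my.cnf, which mysqldump reads automatically, so the password stays off the command line; mysqldump_command() and run_or_fail() are hypothetical names.

```php
<?php
// Hypothetical helpers; assumes credentials live in ~/.my.cnf, e.g.
//   [client]
//   user=USERNAMEHERE
//   password=PASSWORDHERE
function mysqldump_command(string $database, string $sqlFile): string
{
    // escapeshellarg() single-quotes each value so spaces or shell
    // metacharacters in a name or path can't change the command.
    return 'mysqldump ' . escapeshellarg($database) . ' > ' . escapeshellarg($sqlFile);
}

function run_or_fail(string $command): void
{
    // exec() puts the command's exit code in $status; 0 means success.
    exec($command, $output, $status);
    if ($status !== 0) {
        throw new RuntimeException("Command failed with status $status: $command");
    }
}

$sqlFile = getenv('HOME') . '/backup/sitex.com-' . date('Y-m-d') . '.sql';
// Print the command; wrap it in run_or_fail(...) instead to execute it.
echo mysqldump_command('DATABASENAMEHERE', $sqlFile), PHP_EOL;
```

An uncaught RuntimeException ends the script, so nothing after a failed dump gets archived or uploaded.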

The next line archives and gzips your site’s web directory. Check the directory path for your site; you need to know where the site lives on your server.

exec("tar -zcvf ~/backup/sitex.com-dir-".date('Y-m-d').".tar.gz /var/www/public_html/sitex.com");
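As written, GNU tar stores the full path (minus the leading slash) inside the archive. If you would rather have entries start at the site’s folder, tar’s -C option switches directory before adding files. A sketch of that variant, with web_archive_command() as a hypothetical helper and the same example paths:

```php
<?php
// Hypothetical helper: archive a web root so entries inside the tarball
// start at the site's folder (sitex.com/...) rather than
// var/www/public_html/sitex.com/...
function web_archive_command(string $webRoot, string $archive): string
{
    $parent = dirname($webRoot);   // e.g. /var/www/public_html
    $folder = basename($webRoot);  // e.g. sitex.com
    // -c create, -z gzip, -f archive file (-v omitted to keep cron output quiet);
    // -C changes into $parent before adding $folder.
    return 'tar -czf ' . escapeshellarg($archive)
        . ' -C ' . escapeshellarg($parent) . ' ' . escapeshellarg($folder);
}

$archive = getenv('HOME') . '/backup/sitex.com-dir-' . date('Y-m-d') . '.tar.gz';
echo web_archive_command('/var/www/public_html/sitex.com', $archive), PHP_EOL;
```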

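Now, an optional line. I didn’t want to keep local web directory backups older than three months. This line deletes the web directory archive dated exactly three months before today; because the cron job below runs on the first of every month, that removes the oldest archive each time the script runs. It only affects the local copy, so archives already pushed to S3 stay there until you remove them (an S3 lifecycle rule can expire them automatically). You can also duplicate and modify this line to remove the database archives, but mine don’t take up much space, so I keep them around for easy access.

@unlink(getenv('HOME')."/backup/sitex.com-dir-".date('Y-m-d', strtotime("now -3 month")).".tar.gz");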
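To prune every local web directory archive older than roughly three months, regardless of which day the script runs, one option is to compare file modification times. This sketch uses glob() and filemtime(); prune_old_backups() is a hypothetical name, and 92 days is only an approximation of three months.

```php
<?php
// Hypothetical helper: delete local archives matching $pattern in $dir whose
// modification time is more than $maxAgeDays days old. Local files only;
// copies already uploaded to S3 are untouched.
function prune_old_backups(string $dir, string $pattern, int $maxAgeDays): array
{
    $cutoff = time() - $maxAgeDays * 86400; // 86400 seconds per day
    $removed = [];
    // glob() returns the matching paths (or false on error); ?: [] covers both.
    foreach (glob($dir . '/' . $pattern) ?: [] as $file) {
        // filemtime() gives the last-modified time as a Unix timestamp.
        if (is_file($file) && filemtime($file) < $cutoff && unlink($file)) {
            $removed[] = basename($file);
        }
    }
    return $removed;
}

// Usage: keep roughly three months of web directory archives.
// prune_old_backups(getenv('HOME') . '/backup', 'sitex.com-dir-*.tar.gz', 92);
```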

Now the fun part. These commands will push the backups of your database and web directory to your S3 bucket. Be sure to replace U62 with your bucket name.

exec("s3cmd -v put ~/backup/sitex.com-db-".date('Y-m-d').".tar.gz s3://U62");

exec("s3cmd -v put ~/backup/sitex.com-dir-".date('Y-m-d').".tar.gz s3://U62");
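Both uploads run blindly, so a failed transfer would go unnoticed. Here is a sketch that checks each one, assuming s3cmd exits with a non-zero status when an upload fails; s3_put_command() and upload_all() are hypothetical helpers, and U62 is still the placeholder bucket name.

```php
<?php
// Hypothetical helper: build an s3cmd upload command for one file.
function s3_put_command(string $file, string $bucket): string
{
    return 's3cmd put ' . escapeshellarg($file) . ' ' . escapeshellarg('s3://' . $bucket);
}

// Upload each file; return the ones whose s3cmd call reported failure.
function upload_all(array $files, string $bucket): array
{
    $failed = [];
    foreach ($files as $file) {
        $output = [];
        // 2>&1 folds s3cmd's error messages into $output.
        exec(s3_put_command($file, $bucket) . ' 2>&1', $output, $status);
        if ($status !== 0) {
            $failed[] = $file;
            fwrite(STDERR, "Upload failed for $file:\n" . implode("\n", $output) . "\n");
        }
    }
    return $failed;
}

// Usage (not run here):
// $date = date('Y-m-d');
// $dir  = getenv('HOME') . '/backup';
// $failed = upload_all(["$dir/sitex.com-db-$date.tar.gz", "$dir/sitex.com-dir-$date.tar.gz"], 'U62');
// exit($failed ? 1 : 0);
```

Exiting with a non-zero status makes the failure visible to whatever runs the script, including cron.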

Finally, end the file, closing that initial <?php tag.

?>

Here it is all put together (in only ten lines!):

<?php

date_default_timezone_set('America/New_York');

exec("mysqldump -uUSERNAMEHERE -pPASSWORDHERE DATABASENAMEHERE > ~/backup/sitex.com-".date('Y-m-d').".sql");

exec("tar -zcvf ~/backup/sitex.com-db-".date('Y-m-d').".tar.gz ~/backup/sitex.com-".date('Y-m-d').".sql");

unlink(getenv('HOME')."/backup/sitex.com-".date('Y-m-d').".sql");

exec("tar -zcvf ~/backup/sitex.com-dir-".date('Y-m-d').".tar.gz /var/www/public_html/sitex.com");

@unlink(getenv('HOME')."/backup/sitex.com-dir-".date('Y-m-d', strtotime("now -3 month")).".tar.gz");

exec("s3cmd -v put ~/backup/sitex.com-db-".date('Y-m-d').".tar.gz s3://U62");

exec("s3cmd -v put ~/backup/sitex.com-dir-".date('Y-m-d').".tar.gz s3://U62");

?>

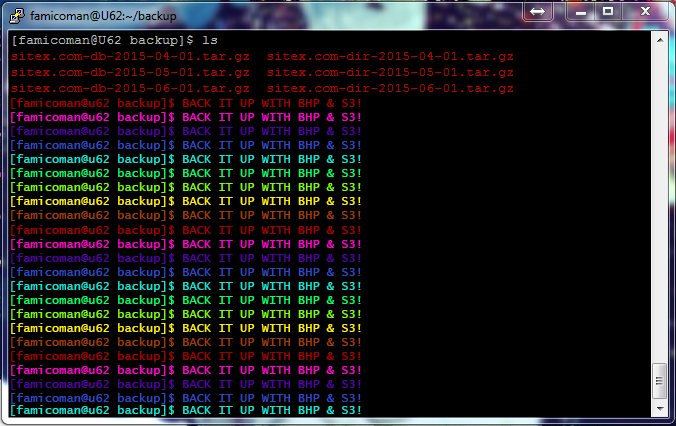
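For multiple sites, the suggestion earlier is to duplicate and modify the lines. An alternative sketch keeps the sites in an array and loops over them; the $sites structure, site_commands(), and the --run flag are all hypothetical. By default it only prints the commands it would run, which is handy for checking paths; it executes them only when called as php backup.php --run, so a crontab entry for this version would need that flag. It also assumes MySQL credentials in ~/.my.cnf rather than -u/-p flags.

```php
<?php
date_default_timezone_set('America/New_York');

// Hypothetical site list: one entry per site to back up.
$sites = [
    'sitex.com' => ['database' => 'DATABASENAMEHERE', 'webroot' => '/var/www/public_html/sitex.com'],
];

// Build the shell commands for one site, in the same order as the original script.
function site_commands(string $name, array $site, string $dir, string $bucket, string $date): array
{
    $sql = escapeshellarg("$dir/$name-$date.sql");
    $db  = escapeshellarg("$dir/$name-db-$date.tar.gz");
    $web = escapeshellarg("$dir/$name-dir-$date.tar.gz");
    $s3  = escapeshellarg("s3://$bucket");
    return [
        'mysqldump ' . escapeshellarg($site['database']) . " > $sql",
        "tar -czf $db $sql",
        "rm -f $sql",
        "tar -czf $web " . escapeshellarg($site['webroot']),
        "s3cmd put $db $s3",
        "s3cmd put $web $s3",
    ];
}

$dryRun = !in_array('--run', $argv ?? [], true);
$dir = getenv('HOME') . '/backup';
$date = date('Y-m-d'); // computed once so every file shares one date stamp

foreach ($sites as $name => $site) {
    foreach (site_commands($name, $site, $dir, 'U62', $date) as $command) {
        if ($dryRun) {
            echo $command, PHP_EOL;
            continue;
        }
        exec($command, $output, $status);
        if ($status !== 0) {
            fwrite(STDERR, "Failed ($status): $command\n");
            break; // skip this site's remaining steps, move on to the next site
        }
    }
}
```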

Okay, now our script is finalized. You should now save it and run it with the command below in your console to test it out!

php backup.php

Provided you edited all the paths and values properly, your script should push the two files to S3! Give yourself a pat on the back, but don’t celebrate just yet. We don’t want to have to run this on demand every time we want a backup. Luckily we can automate the backup process. Back in your console, run the following command:

crontab -e

This will load up your crontab, allowing you to add jobs to Cron, a time-based scheduler. The syntax of Cron entries is outside the scope of this article, but information is abundant online. Add the line below to your crontab (be sure to edit the path to your script) and save it; the job will then run at midnight on the first of every month.

0 0 1 * * /usr/bin/php /home/famicoman/backup/backup.php

That’s it! Your backup job will run every month until you tell it not to. Of course, you can customize the schedule to run more often, less often, or however you prefer. I’ve been running this configuration for nearly four years and have never had a hiccup.

Backing up to Amazon S3 can be done easily and quickly, allowing you to create and store backups for years to come. Depending on the size of your installations, the frequency of your backups, and the thickness of your wallet, S3 may not be for you. However, for a small operation and at pennies a month, you can get up and running with Amazon S3 in a matter of minutes and add another layer of redundancy for your important data.